How Agentic AI Prevents Fraud in Financial Services

Key Takeaways

- Agentic AI monitors transactions in real time and spots suspicious behavior instantly.

- Uses multi-model reasoning to detect anomalies more accurately.

- Triggers automatic countermeasures like account freezes or step-up authentication.

- Learns continuously from every resolved case without waiting for human input.

- Detects new and evolving fraud patterns (deepfakes, synthetic IDs, AI-generated takeovers).

- Completes end-to-end fraud investigations in under 50 ms, far faster than rules-based systems.

The Arms Race Has Shifted

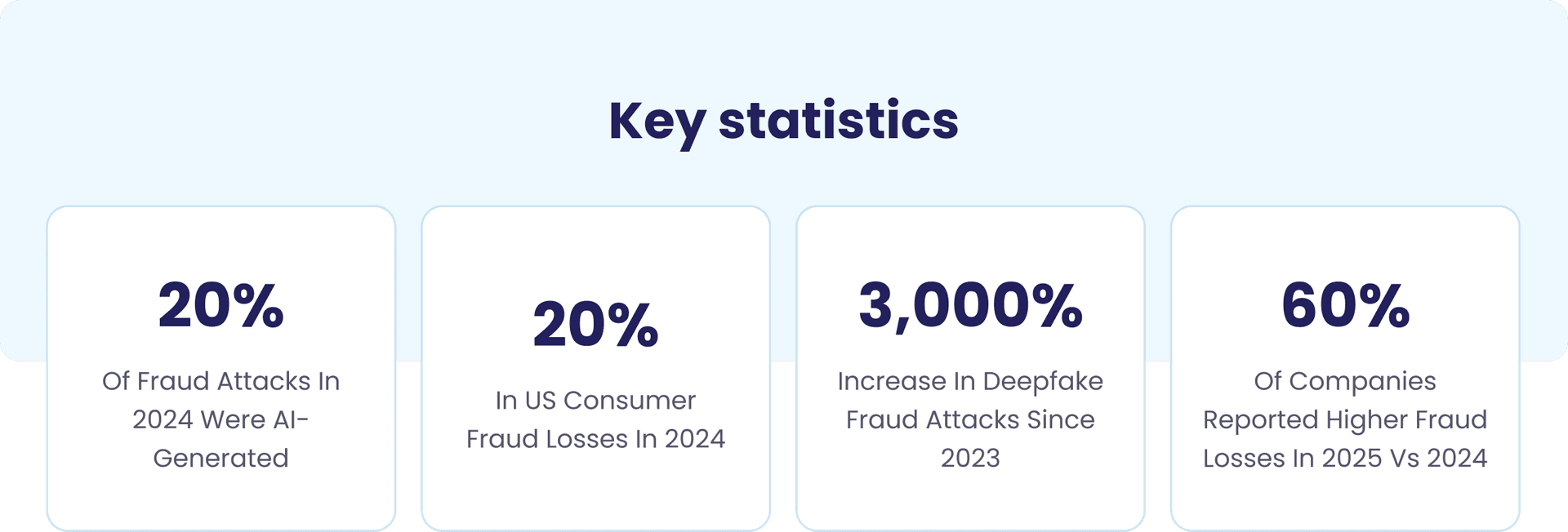

Fraud has gone autonomous. Criminals no longer need a team of operators manually crafting phishing emails or testing stolen card numbers one by one. In 2024, financial institutions saw a 25% year-over-year increase in intrusion events, driven in part by AI-enhanced phishing, synthetic identity fraud, and automated website cloning kits used to mimic banking reports.

The institutional response, however, has largely not kept pace. Most fraud operations still rely on static rule thresholds, batch-scored transaction logs, and analyst queues that can take hours or days to clear. These systems were designed for a world where fraud moved at human speed. That world no longer exists.

The scale of the problem is stark. Consumers and businesses lost more than $12.5 billion to fraud in the United States alone in 2024, according to FTC data — a 25% jump from the year prior. Globally, Nasdaq's 2024 Financial Crime Report placed combined fraud and money laundering losses at an estimated $485.6 billion. And Experian's 2026 Fraud Forecast found that nearly 60% of companies reported an increase in fraud losses between 2024 and 2025.

The answer is not more analysts, more rules, or faster rule updates, but a fundamentally different architecture that fights autonomous fraud with autonomous defense. That architecture is agentic AI.

This playbook covers everything a CTO needs to understand: the technical distinction between agentic AI and conventional machine learning, the exact decision flow during a real-time fraud event, sector-specific implementations for banking, insurance, and payments, governance requirements for responsible deployment, and the measurable outcomes institutions can expect.

It also explains how xLoop builds these systems for financial institutions operating in complex regulatory environments.

Why Has Traditional Fraud Detection Stopped Working?

To understand the value of agentic AI, you first need to understand the structural failure modes of the systems it replaces. Legacy fraud detection is not simply slower than modern threats; it is architecturally incapable of addressing them.

What Are the Core Limitations of Rules-Based Fraud Systems?

Rules-based systems operate on a simple principle: define conditions that indicate fraud, and flag any transaction that meets those conditions. This model has four fundamental weaknesses that compound as fraud sophistication increases.

First, fraudsters learn the rules. Static thresholds become public knowledge within fraud communities. Attackers calibrate their behavior to stay just below trigger points, splitting payments, spacing them across accounts, and rotating payees to avoid detection.

Second, batch processing introduces fatal lag. Many institutions still score transactions after the fact rather than in-flight. In a world of real-time payment rails — where funds are irreversible within seconds — a detection window of hours or days is not slow: it is functionally useless. McKinsey found that even existing AI tools, when constrained by human sign-off at each step, fail to transform results because the bottleneck is not computation — it is process.

Third, alert fatigue destroys analyst effectiveness. Broad rules that catch some real fraud also generate enormous numbers of false positives. Compliance teams spend 60–80% of their time clearing false alarms, which leaves little capacity for genuine investigations. Some security teams spend up to 75% of their day on alerts that require no action.

Fourth, siloed data creates blind spots. AML, fraud, KYC, and payments systems in most institutions do not share signals in real time. A transaction monitoring system may not know that the same customer just failed biometric verification, or that the destination account appeared in an AML flag three days ago. Each system sees a fragment of the picture. Agentic AI sees all of it simultaneously.
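The first weakness, payment splitting below a static threshold, can be illustrated with a minimal sketch. All names and limits here (`STATIC_LIMIT`, `WINDOW_SECONDS`, `RollingVelocityCheck`) are hypothetical and chosen for illustration only. A rolling-window check is shown as one simple contrast to a per-transaction rule; it is not a description of any particular vendor's system, and it would not by itself catch payments spread across multiple accounts.

```python
from collections import deque
from dataclasses import dataclass


@dataclass
class Payment:
    account: str
    amount: float
    timestamp: float  # seconds since an arbitrary start point


STATIC_LIMIT = 10_000.0   # hypothetical per-transaction rule threshold
WINDOW_SECONDS = 3600.0   # hypothetical rolling window length
WINDOW_LIMIT = 10_000.0   # hypothetical cumulative limit inside the window


def static_rule(payment: Payment) -> bool:
    """Flag only when a single payment reaches the fixed threshold."""
    return payment.amount >= STATIC_LIMIT


class RollingVelocityCheck:
    """Flag when an account's cumulative amount inside the window reaches the limit."""

    def __init__(self) -> None:
        self._history = {}  # account id -> deque of recent payments

    def check(self, payment: Payment) -> bool:
        window = self._history.setdefault(payment.account, deque())
        window.append(payment)
        # Drop payments that have aged out of the rolling window.
        while payment.timestamp - window[0].timestamp > WINDOW_SECONDS:
            window.popleft()
        return sum(p.amount for p in window) >= WINDOW_LIMIT


# Three payments structured to stay below the static threshold.
split_payments = [Payment("acct-1", 4_000.0, t) for t in (0.0, 600.0, 1200.0)]
static_flags = [static_rule(p) for p in split_payments]

velocity = RollingVelocityCheck()
velocity_flags = [velocity.check(p) for p in split_payments]
```

In this example the static rule never fires, while the rolling check fires on the third payment, once the cumulative total passes the window limit.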

How Are Fraudsters Now Using AI Against Financial Institutions?

The threat landscape in 2025 is categorically different from the environment that shaped most institutions' fraud defenses. Key AI-driven attack vectors:

- Synthetic identity fraud at scale. Generative AI enables the mass production of convincing composite identities. North America saw a 311% increase in synthetic identity document fraud between Q1 2024 and Q2 2025. Datos Insights cautions that GenAI-powered synthetic identities and deepfakes can bypass biometric verification systems.

- AI-generated deepfakes for account takeover and authorization. Voice and video deepfakes impersonate account holders during phone verification and pass liveness checks at onboarding. Deepfake fraud increased by 3,000% since 2023, with AI-driven deepfake attacks occurring every five minutes globally. Secondary analyses suggest human detection of high-quality deepfake video can be as low as 25%.

- Agentic fraud bots. Autonomous AI agents probe an institution's defenses methodically — testing which transaction patterns trigger alerts, which communication approaches succeed with specific victim profiles, and which account types are most vulnerable. These systems operate 24/7 without fatigue, adapting their tactics based on real-time feedback.

- Emotionally intelligent scam bots. GenAI-powered bots conduct complex romance fraud and relative-in-need scams, building trust over extended periods and responding convincingly to skeptical questions.

- Website cloning at industrial scale. AI tools automate fraudulent site creation, enabling fraudsters to spin up convincing bank portal clones faster than takedown requests can eliminate them.

Key insight for CTOs

The institutions most exposed are not those with weak security teams — they are those whose architecture assumes fraud operates at human speed. Every system that requires a human approval step before action can be taken creates a window that automated fraud exploits by design.

What Exactly Is Agentic AI And How Does It Differ From Conventional ML?

Before evaluating agentic AI for fraud prevention, technical leaders need a precise definition. "Agentic AI" is not a marketing term for better machine learning — it describes a categorically different architectural model that enables autonomous multi-step action.

What Makes an AI System Truly 'Agentic'?

- 01 Autonomy: Acts without continuous human instruction. Sets sub-goals and executes multi-step plans to resolve them. The system decides what to investigate next based on what it has already found, not on a pre-written script.

- 02 Perception: Ingests multimodal data streams simultaneously: transactions, behavioral biometrics, device fingerprints, geolocation, voice patterns, social signals, and external threat intelligence feeds. No silos; all signals processed as a unified context.

- 03 Reasoning: Applies LLM-level reasoning to interpret ambiguous or novel signals. Rather than matching against a known-fraud library, the system evaluates whether a pattern is consistent with legitimate behavior, forms hypotheses, and tests them, even for attack vectors it has never seen before.

- 04 Tool use: Calls external APIs, databases, and verification services in real time (sanctions lists, identity vaults, credit bureaus, law enforcement alerts), assembling evidence across the institution's and third parties' data ecosystem.

- 05 Memory and learning: Maintains case history and refines detection thresholds based on outcomes. Each confirmed fraud case or cleared false positive feeds directly back into the model, so the system becomes more accurate with every investigation it handles.

- 06 Multi-agent orchestration: Specialist sub-agents (transaction monitor, identity verifier, behavioral analyst, AML screener, case narrator, compliance reporter) operate in parallel under a planner agent that routes signals, aggregates findings, and makes the final decision.

How Does Agentic AI Compare To Traditional & Generative AI In Fraud Prevention?

We’ve summarized some key capability differences below.

| Key capability | Traditional / Rules-Based ML | Agentic AI |

|---|---|---|

| Detection speed | Hours to days (batch scoring) | Milliseconds (real-time, continuous) |

| Adaptability | Manual rule updates required | Self-learning; updates on new fraud signals continuously |

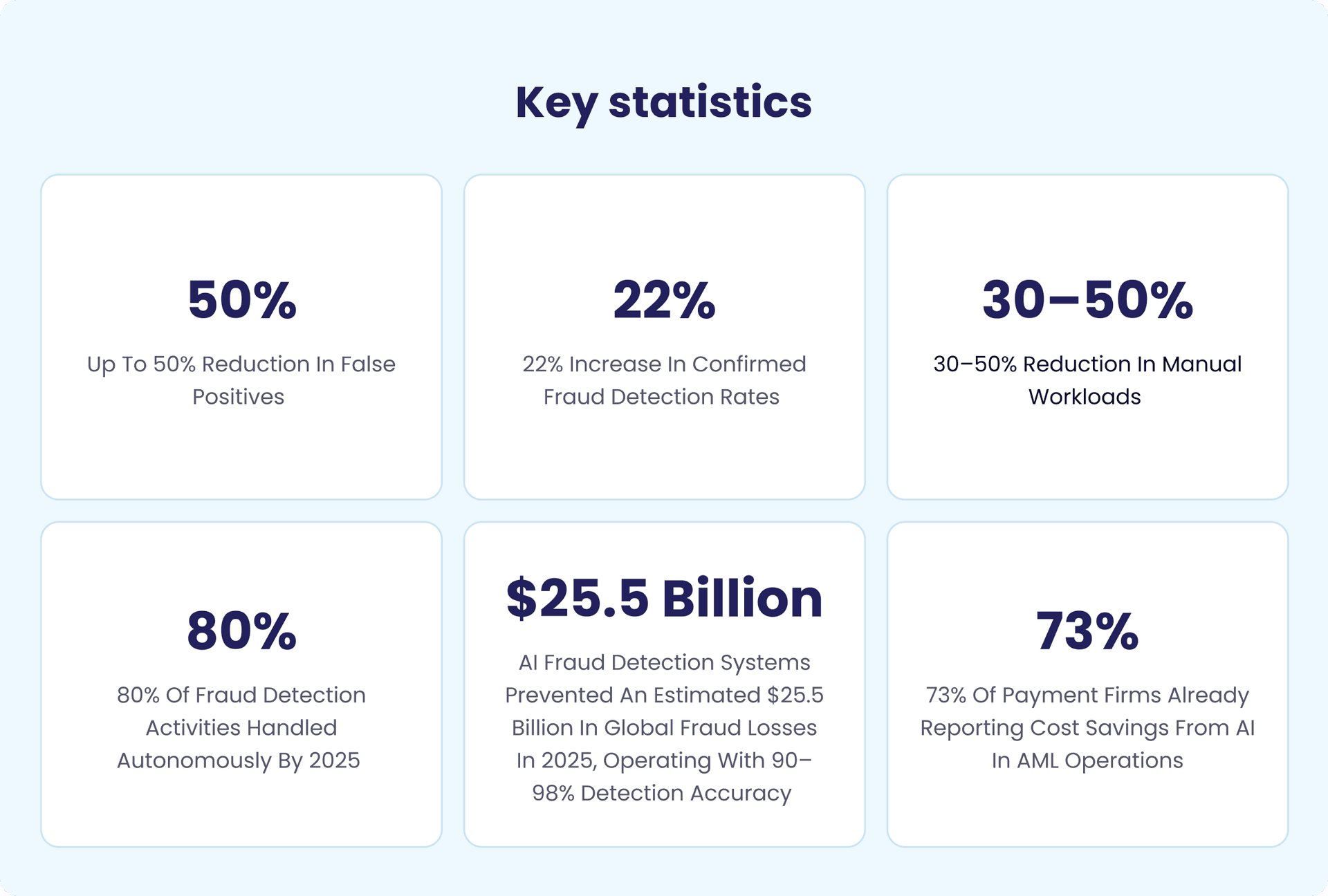

| False positive rate | High, broad rule triggers | Reduced by up to 50–60% via behavioural profiling |

| Novel fraud types (deepfakes, synthetic IDs) | Often missed until manual review | Detected via multimodal cross-referencing and reasoning |

| Investigation workflow | Manual analyst-heavy; hours to days | Autonomous case triage and narrative generation in seconds |

| Regulatory explainability | Limited, black-box model outputs | Full audit trail with structured reasoning chains |

| Scale | Linear cost growth with volume | Scales to millions of daily transactions at flat cost |

| Human-in-the-loop | Required for most decisions | Escalation-only for edge cases and high-stakes decisions |

What Does the Architecture of an Agentic Fraud AI System Look Like?

A production-ready agentic fraud system comprises five interconnected layers:

- Data ingestion layer. Real-time event streams — transactions, login events, device signals — combined with batch enrichment from bureau data, third-party identity providers, and external threat feeds. Historical case data available as contextual memory.

- Orchestration layer (Planner Agent). The central coordinator. Routes events to appropriate specialist agents based on signal type, risk score, and investigation state.

- Specialist agents. Each optimized for a specific investigative domain: Transaction Monitor Agent, Identity Verification Agent, Behavioral Biometrics Agent, AML/Sanctions Screening Agent, Network Analysis Agent, and Investigative Narrative Agent.

- Decision layer. Autonomous approve / flag / block / escalate within pre-defined governance boundaries. High-confidence decisions are executed automatically; edge cases and vulnerable-customer scenarios escalated with full evidence brief.

- Audit and explainability layer. Every decision logged with its complete reasoning chain — what signals were observed, how they were weighted, what action was taken, and why. The regulatory compliance backbone of the system.
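The orchestration and specialist layers described above can be sketched with Python's `asyncio`. This is a minimal illustration under stated assumptions: the agent functions, the `Finding` type, the event fields, and the scoring rules are all hypothetical placeholders, and a real specialist agent would call models and external services rather than apply a single comparison.

```python
import asyncio
from dataclasses import dataclass


@dataclass
class Finding:
    agent: str
    risk: float  # 0.0 (benign) to 1.0 (almost certainly fraud)
    note: str


async def transaction_monitor(event: dict) -> Finding:
    # Placeholder rule standing in for a multi-signal risk model.
    risk = 0.8 if event["amount"] > 5_000 else 0.1
    return Finding("transaction_monitor", risk, "amount vs. baseline")


async def behavioral_biometrics(event: dict) -> Finding:
    # Placeholder rule standing in for a session-behavior comparison.
    risk = 0.7 if event["typing_deviation"] > 3.0 else 0.1
    return Finding("behavioral_biometrics", risk, "typing cadence deviation")


async def aml_screening(event: dict) -> Finding:
    # Placeholder watch list; a real agent would query external services.
    risk = 1.0 if event["payee"] in {"mule-001"} else 0.0
    return Finding("aml_screening", risk, "payee watch-list lookup")


async def planner(event: dict) -> list:
    """Fan the event out to all specialists concurrently and gather findings."""
    results = await asyncio.gather(
        transaction_monitor(event),
        behavioral_biometrics(event),
        aml_screening(event),
    )
    return list(results)


event = {"amount": 9_500, "typing_deviation": 4.2, "payee": "acct-778"}
findings = asyncio.run(planner(event))
```

`asyncio.gather` returns results in the order the coroutines were passed, so the planner can map each finding back to its specialist without extra bookkeeping.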

The Real-Time Fraud Prevention Playbook - What Actually Happens When A Threat Occurs?

What Happens in the First 50 Milliseconds of a Suspicious Transaction?

The entire investigation — signal ingestion, parallel agent analysis, identity verification, AML cross-referencing, autonomous decision, and regulatory case documentation — completes before a human would have finished reading the transaction details:

Transaction event published to the agent orchestration queue. Planner Agent instantiated with full transaction context: amount, payee, channel, device fingerprint, geolocation, and session metadata.

Planner routes concurrently to Transaction Monitor Agent and Behavioral Biometrics Agent. Both begin processing simultaneously, with no sequential queuing.

Transaction Monitor scores against 200+ dynamic risk signals — velocity across accounts, geographic inconsistencies, device reputation, payment amount patterns against historical baseline, payee relationship history, time-of-day behavioral norms.

Behavioral Biometrics Agent compares current session behavior (typing rhythm, scroll speed, mouse movement, touch pressure) against the enrolled user's baseline. Mid-session behavior changes flag account takeover even after successful authentication.

If the aggregate risk score exceeds the dynamic threshold, the Identity Verification Agent initiates step-up authentication. Simultaneously, the AML/Sanctions Agent cross-references the destination account against sanctions lists, PEP databases, watch lists, and known mule account indicators.

Planner Agent aggregates all findings and makes the autonomous decision: Approve (low risk), Soft-block (step-up auth required), Hard-block (high-confidence fraud), or Escalate (novel pattern, vulnerable customer, or high-value threshold breach).

Investigative Narrative Agent auto-generates a structured case summary — signals observed, reasoning chain, action taken — ready for regulatory submission without any manual input.

Decision delivered to the customer-facing system. Legitimate transactions proceed without friction. Suspicious transactions are blocked or challenged.
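The Planner Agent's final step, mapping aggregated findings to one of the four outcomes, might look like the sketch below. The thresholds, the use of the maximum agent score as the aggregate, and the ordering of the checks are assumptions made for illustration; the source does not specify how scores are combined.

```python
from enum import Enum


class Decision(Enum):
    APPROVE = "approve"
    SOFT_BLOCK = "soft_block"
    HARD_BLOCK = "hard_block"
    ESCALATE = "escalate"


def decide(risk_scores, amount, vulnerable_customer, novel_pattern,
           soft_threshold=0.5, hard_threshold=0.9, high_value_limit=50_000.0):
    """Map per-agent risk scores and case context to a decision.

    Assumption: the aggregate is the highest single agent score, and
    escalation applies only once that score reaches the soft threshold.
    """
    aggregate = max(risk_scores.values())
    if aggregate < soft_threshold:
        return Decision.APPROVE
    # Elevated risk in a sensitive context goes to a human, not an auto-block.
    if vulnerable_customer or novel_pattern or amount >= high_value_limit:
        return Decision.ESCALATE
    if aggregate >= hard_threshold:
        return Decision.HARD_BLOCK
    return Decision.SOFT_BLOCK


low = decide({"monitor": 0.2, "biometrics": 0.1}, 120.0, False, False)
medium = decide({"monitor": 0.6, "biometrics": 0.3}, 120.0, False, False)
high = decide({"monitor": 0.95, "biometrics": 0.4}, 120.0, False, False)
sensitive = decide({"monitor": 0.95, "biometrics": 0.4}, 120.0, True, False)
```

Placing the escalation check ahead of the hard-block check reflects one possible design choice: a high-confidence score on a vulnerable customer's account is routed to an analyst rather than blocked automatically.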

Aveni reports a global bank cut false alarms by 60% and increased confirmed fraud detections by 22% after deploying agentic AI.

How Does Agentic AI Handle Novel Fraud Patterns It Has Never Seen Before?

Rather than matching against a known-fraud library, the agentic reasoning layer generates hypotheses. When a sequence of events does not match any learned pattern but produces anomalous signal combinations, the Reasoning Agent forms a hypothesis — "this sequence resembles a structured layering pattern not in training data" — and tests it by pulling additional context from external threat intelligence and recent case history.

Critically, novel patterns with high uncertainty are not silently missed. They are escalated to human analysts with a full evidence brief. And once the analyst confirms or clears the case, that decision feeds directly back into model refinement, tightening detection on the new vector for all future transactions.

How Does the System Balance Speed Against Customer Experience?

False positives have a cost beyond wasted analyst time: they frustrate legitimate customers, damage trust, and drive attrition.

A well-architected agentic fraud system is as focused on minimizing unnecessary friction for good customers as it is on stopping bad ones. This is achieved through:

- Dynamic threshold calibration. Risk thresholds adjust per customer segment, transaction type, channel, and time of day, so familiar payment patterns face less intervention than first-time international transfers to new payees.

- Tiered response. Low-risk anomalies trigger silent monitoring; medium-risk trigger friction (biometric step-up, not account freeze); only high-confidence fraud triggers a hard block.

- Customer communication agents. Automated, context-aware outreach for flagged transactions preserves the customer relationship without requiring a human agent to make the call.
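Dynamic threshold calibration and tiered response can be combined in a few lines. The sketch below is illustrative only: the base threshold, the adjustments, and the function names are hypothetical values, not calibrated figures from any deployment.

```python
HARD_BLOCK_SCORE = 0.9  # hypothetical score above which a hard block applies


def intervention_threshold(known_payee: bool, international: bool,
                           night_time: bool) -> float:
    """Return the risk score a transaction must reach before friction is applied."""
    threshold = 0.60  # hypothetical base threshold
    if known_payee:
        threshold += 0.15  # familiar pattern: tolerate more before intervening
    if international:
        threshold -= 0.15  # unfamiliar corridor: intervene earlier
    if night_time:
        threshold -= 0.05
    # Clamp so the threshold never becomes trivially low or unreachable.
    return round(min(max(threshold, 0.05), 0.95), 2)


def tiered_response(risk: float, threshold: float) -> str:
    """Choose the least intrusive response consistent with the risk score."""
    if risk >= HARD_BLOCK_SCORE:
        return "hard_block"
    if risk >= threshold:
        return "step_up"   # e.g. biometric step-up, not an account freeze
    return "monitor"       # silent monitoring, no customer friction


familiar = intervention_threshold(known_payee=True, international=False, night_time=False)
unfamiliar = intervention_threshold(known_payee=False, international=True, night_time=True)
```

With these illustrative numbers, the same risk score of 0.5 leads to silent monitoring for the familiar payment and a step-up challenge for the first-time international transfer.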

Sector Playbooks for Banking, Insurance, & Payments

Fraud in banking is not the same as fraud in insurance, which is not the same as fraud in payments. Each sector has distinct fraud typologies, different regulatory obligations, and different tolerances for false positives. A generic agentic AI system that ignores these distinctions will underperform. Here is how the architecture and emphasis shift across the three sectors.

Banking: How Do Agentic AI Systems Stop Account Takeover and APP Scams in Real Time?

Banking fraud in 2025 is dominated by two vectors that conventional systems handle poorly: account takeover (ATO) via session hijacking and credential stuffing, and authorized push payment (APP) scams where victims are socially engineered into transferring funds themselves. UK Finance reports £1.17 billion total fraud losses in 2024, including £450.7 million in APP fraud.

Agentic AI addresses ATO through continuous session behavioral monitoring that persists beyond the login event. A fraudster who has stolen valid credentials and passed multi-factor authentication will still behave differently from the legitimate account holder — different typing cadence, different navigation patterns, different device characteristics. The Behavioral Biometrics Agent flags these session-level discrepancies and triggers re-verification before a payment is initiated, not after.
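One simple way to quantify a session-level discrepancy is a z-score of the current session's mean keystroke interval against the enrolled baseline. This is a deliberately reduced sketch: production behavioral biometrics use many features and learned models, and the threshold of 3.0 and the sample values below are assumptions for illustration.

```python
from statistics import mean, stdev

REVERIFY_Z = 3.0  # hypothetical deviation threshold for re-verification


def session_deviation(baseline_intervals_ms, session_intervals_ms) -> float:
    """Return how many baseline standard deviations the session mean is away."""
    mu = mean(baseline_intervals_ms)
    sigma = stdev(baseline_intervals_ms)  # sample standard deviation
    if sigma == 0:
        raise ValueError("baseline has no variation; cannot compute a z-score")
    return abs(mean(session_intervals_ms) - mu) / sigma


def needs_reverification(baseline_intervals_ms, session_intervals_ms) -> bool:
    return session_deviation(baseline_intervals_ms, session_intervals_ms) >= REVERIFY_Z


baseline = [180, 190, 200, 210, 220]   # enrolled user's keystroke intervals (ms)
same_user = [195, 205, 200]            # session consistent with the baseline
different_typist = [120, 110, 130]     # markedly faster typing cadence

same_user_flag = needs_reverification(baseline, same_user)
different_typist_flag = needs_reverification(baseline, different_typist)
```

Because the check runs on session behavior rather than credentials, it can fire after a successful login, which is the point the paragraph above makes about stolen credentials.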

APP scam detection requires recognizing social engineering patterns in payment context. Agentic AI identifies the combination of signals that correlate with APP scam victimization — a new payee the account holder has never paid before, receiving an unusually large amount, with the transfer initiated unusually quickly — and triggers a contextual warning that asks the customer specific questions about the transfer. The agent does not simply decline the payment; it engages in a targeted intervention.
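The signal combination described for APP scams can be expressed as a small rule sketch. The field names, the amount multiple, and the rush window are hypothetical; a deployed system would likely learn these patterns rather than hard-code them.

```python
from dataclasses import dataclass


@dataclass
class Transfer:
    amount: float
    payee_seen_before: bool
    typical_amount: float             # customer's usual transfer size
    seconds_since_payee_added: float  # how quickly the transfer followed setup


def app_scam_signals(transfer: Transfer, amount_multiple: float = 5.0,
                     rush_seconds: float = 300.0) -> list:
    """Return the APP-scam indicators present on this transfer."""
    signals = []
    if not transfer.payee_seen_before:
        signals.append("new_payee")
    if transfer.amount >= amount_multiple * transfer.typical_amount:
        signals.append("unusually_large")
    if transfer.seconds_since_payee_added <= rush_seconds:
        signals.append("rushed_transfer")
    return signals


def intervention(transfer: Transfer) -> str:
    """Warn, rather than decline, when all three indicators coincide."""
    if len(app_scam_signals(transfer)) == 3:
        return "contextual_warning"
    return "proceed"


suspicious = Transfer(8_000.0, False, 400.0, 90.0)
routine = Transfer(450.0, True, 400.0, 86_400.0)
```

The `contextual_warning` outcome stands in for the targeted customer questions described above; the wording of those questions is outside the scope of this sketch.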

Synthetic identity fraud at onboarding is addressed by a coordinated agent pipeline running document liveness detection, behavioral biometrics during the application, bureau cross-referencing, and network analysis as a unified workflow — not sequential checks that can be passed one at a time.

Real-world reference

- HSBC's AI-driven ‘Dynamic Risk Assessment’ platform demonstrates core agentic characteristics: it updates its detection logic continuously as fraud tactics change, producing a material reduction in false positive alerts.

- JP Morgan and Bank of America both announced agentic AI fraud workflow deployments in 2025. DBS Bank publicly outlined governance for agentic AI in the same year, and highlighted fraud detection as a core opportunity area.

Insurance: How Can Agentic AI Detect Fraudulent Claims Before They're Paid?

Insurance fraud costs an estimated $80 billion annually in the United States. In the UK, the ABI uncovered 98,400 fraudulent claims in 2024, a 12% increase from 2023, with a total value of £1.1 billion. Agentic AI addresses this through three complementary capabilities:

- Claims Triage Agent. Scores every incoming claim for fraud risk using 300+ signals — claim timing relative to policy inception, claimant history across policies, repair shop and medical provider relationships, solicitor involvement patterns, and claim characteristics that statistically correlate with staged events.

- Document Analysis Agent. Reads supporting documentation with LLM-level comprehension — medical reports, police reports, invoices, weather data — identifying inconsistencies that human reviewers miss: timestamps that contradict each other, injury descriptions inconsistent with the reported mechanism, medical reports using boilerplate language associated with known fraud farms.

- Network Analysis Agent. Maps relationships between claimants, solicitors, repair garages, and medical providers — identifying organized fraud rings invisible to any single-claim investigation but visible as network patterns across the claims portfolio.
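The network view in the last item can be illustrated by grouping claims that share an entity (garage, solicitor, medical provider) into connected components. The sketch uses a basic union-find structure; the claim and entity identifiers are invented, and a shared provider is only a reason to look closer, not evidence of fraud in itself.

```python
from collections import defaultdict


def find_rings(claims: dict, min_size: int = 3) -> list:
    """Group claims linked through shared entities.

    `claims` maps a claim id to the set of entity ids it involves.
    Returns the groups of claim ids with at least `min_size` members.
    """
    parent = {claim: claim for claim in claims}

    def find(x):
        # Follow parent links to the group root, compressing the path.
        while parent[x] != x:
            parent[x] = parent[parent[x]]
            x = parent[x]
        return x

    first_claim_for_entity = {}
    for claim, entities in claims.items():
        for entity in entities:
            if entity in first_claim_for_entity:
                # Shared entity: merge this claim's group with the earlier one.
                parent[find(claim)] = find(first_claim_for_entity[entity])
            else:
                first_claim_for_entity[entity] = claim

    groups = defaultdict(set)
    for claim in claims:
        groups[find(claim)].add(claim)
    return [group for group in groups.values() if len(group) >= min_size]


claims = {
    "C1": {"garage-A", "solicitor-X"},
    "C2": {"garage-A", "clinic-M"},
    "C3": {"clinic-M"},
    "C4": {"garage-B"},
}
rings = find_rings(claims)
```

Here C1 and C3 share no entity directly, yet they fall into the same group through C2, which is the kind of link a single-claim review would not surface.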

Real-world reference

Allianz launched its first integrated agentic AI system, built and deployed in under 100 days.

Project Nemo uses seven specialized agents to automate food spoilage claims below AUD$500 during natural catastrophe events. Results: 80% reduction in processing time, full 7-agent workflow executing in under 5 minutes, claims resolved in hours vs. prior processing of days. Maria Janssen, Chief Transformation Officer at Allianz Services: "With Project Nemo as our first integrated agentic AI solution, we're achieving an impressive 80% reduction in claim processing and settlement time."

Payments: How Does Agentic AI Protect Real-Time Payment Rails from Fraud at Scale?

Payments fraud has a defining characteristic that sets it apart: irreversibility. On real-time payment rails — FedNow, SEPA Instant, UPI, Pix — once a fraudulent payment is executed, recovery is extremely difficult. Detection must happen before authorization. There is no remediation window.

The emerging complication in payments is agentic commerce: the growing use of AI agents authorized to initiate transactions on behalf of human users. Javelin Strategy and Research identifies this as a new frontier requiring different risk frameworks for agent-initiated payments, binding agent credentials to verified user accounts, and different authorization rules when the initiating identity is non-human.

- Transaction Graph Agent. Analyses full payment network topology in real time — detects money mule networks and velocity patterns across millions of accounts simultaneously.

- Dynamic Authorization Thresholds. Agents simulate fraud scenarios per second and calibrate approval thresholds without interrupting legitimate flows.

- ISO 20022 Enrichment. Richer payment data structures enable more precise risk signals for agent reasoning.
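As a rough illustration of the topology analysis mentioned for the Transaction Graph Agent, the sketch below flags accounts that receive funds from several distinct senders and forward almost all of it onward, a pattern often associated with mule accounts. The thresholds and account names are hypothetical, and the sketch ignores timing, which a real-time system would need to consider.

```python
from collections import defaultdict


def mule_candidates(transfers, min_senders: int = 3,
                    min_forward_ratio: float = 0.9) -> list:
    """Return accounts with many distinct senders that pass most funds on.

    `transfers` is an iterable of (sender, receiver, amount) tuples.
    """
    inflow = defaultdict(float)
    outflow = defaultdict(float)
    senders = defaultdict(set)
    for sender, receiver, amount in transfers:
        inflow[receiver] += amount
        outflow[sender] += amount
        senders[receiver].add(sender)

    return sorted(
        account for account in inflow
        if len(senders[account]) >= min_senders
        and outflow[account] >= min_forward_ratio * inflow[account]
    )


transfers = [
    ("a", "m", 100.0), ("b", "m", 200.0), ("c", "m", 300.0),
    ("m", "x", 590.0),  # "m" forwards nearly everything it received
    ("a", "shop", 50.0), ("b", "shop", 20.0), ("c", "shop", 10.0),
]
candidates = mule_candidates(transfers)
```

The merchant account `shop` also has three senders but keeps the funds, so only `m` is returned.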

Market context:

In 2025, the payments fraud landscape became significantly more sophisticated, with The Paypers noting a sharp rise in AI-driven fraud and deepfake-enabled attacks. Meanwhile, research from Hawk.ai and Chartis shows that payments and fintech firms are scaling AI at speed: 94% plan to increase AI investments, and emerging GenAI/agentic AI systems are already improving detection accuracy and investigation efficiency.

73% of payment firms already report cost savings from AI in AML operations; 42% project savings exceeding $5 million annually within 12 months.

What Does Responsible Deployment Of Agentic Fraud AI Look Like?

For any CTO in a regulated financial institution, the governance question is as important as the capability question. Deploying an autonomous system that makes consequential decisions about customer accounts without an adequate governance framework creates regulatory and reputational exposure that exceeds the fraud risk it is meant to address.

How Do You Ensure Agentic AI Fraud Decisions Are Explainable to Regulators?

Explainability is a first-principle design requirement, not a feature to retrofit. Every autonomous decision must produce an auditable reasoning chain that documents what signals were observed, how they were weighted, what action was taken, and why — in human-readable form suitable for regulatory submission.
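An auditable reasoning chain can be captured as an immutable, serializable record. The sketch below uses standard-library dataclasses and JSON; the field names and example values are assumptions chosen to mirror the four elements listed above (signals observed, weights, action, and rationale), not a regulatory schema.

```python
import json
from dataclasses import asdict, dataclass
from datetime import datetime, timezone


@dataclass(frozen=True)
class Signal:
    name: str
    value: float
    weight: float  # how strongly this signal influenced the decision


@dataclass(frozen=True)
class AuditRecord:
    transaction_id: str
    signals: tuple      # tuple of Signal, kept immutable once written
    action: str
    rationale: str
    decided_at: str     # ISO 8601 timestamp in UTC

    def to_json(self) -> str:
        # asdict converts nested dataclasses; tuples serialize as JSON arrays.
        return json.dumps(asdict(self), sort_keys=True)


record = AuditRecord(
    transaction_id="txn-0001",
    signals=(
        Signal("new_payee", 1.0, 0.4),
        Signal("typing_deviation", 5.1, 0.6),
    ),
    action="soft_block",
    rationale="New payee combined with a session cadence far from baseline.",
    decided_at=datetime(2025, 1, 1, tzinfo=timezone.utc).isoformat(),
)
serialized = record.to_json()
restored = json.loads(serialized)
```

Freezing the dataclasses is a small design choice that makes accidental mutation of a logged decision raise an error instead of silently altering the record.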

This matters practically for SAR (Suspicious Activity Report) generation. 90% of banking executives rank SAR narrative drafting among their top five AI use cases — because the Investigative Narrative Agent capability of a well-built agentic system can draft complete, regulatorily compliant SAR narratives from case evidence automatically.

Regulatory frameworks to design against: the FCA's APP fraud reimbursement rules, DORA (the EU's Digital Operational Resilience Act), and SR 11-7 (US Fed and OCC guidance on model risk management). An agentic system without an explainability layer cannot meet any of these frameworks.

Where Should Human Oversight Remain in an Agentic Fraud System?

Full autonomy is neither technically appropriate nor regulatorily defensible for all fraud decisions. Scenarios that should always involve a human include:

- Interactions with potentially vulnerable customers (elderly customers, those showing signs of coercion, accounts with vulnerability markers)

- Investigations with geopolitical or reputational complexity exceeding the system's defined authority

- Sanctions designations, which carry direct legal liability

- Cases involving amounts above defined high-value thresholds where the consequences of an error are significant

The crucial design principle: when an agentic system escalates to a human, it delivers a complete, structured investigation brief with all evidence assembled — not a generic alert that creates more work for the analyst.
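The escalation principle above, a structured brief rather than a bare alert, can be sketched as follows. The `Case` fields, the high-value limit, and the brief layout are hypothetical and cover only three of the listed scenarios.

```python
from dataclasses import dataclass

HIGH_VALUE_LIMIT = 50_000.0  # hypothetical high-value threshold


@dataclass
class Case:
    case_id: str
    amount: float
    vulnerability_marker: bool
    sanctions_hit: bool
    evidence: list  # findings already assembled by the agents


def human_review_reasons(case: Case) -> list:
    """List every reason this case must be seen by a human analyst."""
    reasons = []
    if case.vulnerability_marker:
        reasons.append("potentially vulnerable customer")
    if case.sanctions_hit:
        reasons.append("possible sanctions match")
    if case.amount >= HIGH_VALUE_LIMIT:
        reasons.append("amount above high-value threshold")
    return reasons


def build_brief(case: Case):
    """Return a structured brief for the analyst, or None if no escalation applies."""
    reasons = human_review_reasons(case)
    if not reasons:
        return None
    return {
        "case_id": case.case_id,
        "reasons": reasons,
        "evidence": list(case.evidence),
    }


escalated = build_brief(Case("case-7", 62_000.0, True, False,
                             ["new payee", "session cadence anomaly"]))
autonomous = build_brief(Case("case-8", 90.0, False, False, []))
```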

How Do You Prevent the Agentic AI System Itself from Being Compromised?

- Pre-deployment red teaming. Adversarial testing of agent pipelines against prompt injection, data poisoning, and coordinated probing sequences before any production deployment.

- Input sanitization. All external data ingested by agents — customer-supplied documents, transaction metadata, third-party API responses — sanitized before processing to prevent prompt injection via data payloads.

- Privilege isolation. Agents operate within least-privilege boundaries. No individual agent has unconstrained system access.

- Model drift monitoring. Continuous monitoring for distributional shift that could indicate evolving fraud tactics or system degradation, with automated alerts when drift exceeds defined thresholds.
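For the drift monitoring item, one commonly used measure of distributional shift is the Population Stability Index (PSI), computed over binned score proportions as the sum of (actual - expected) * ln(actual / expected). The source does not name a specific metric, so PSI is an assumption here, as is the alert threshold of 0.2, which is a rule of thumb rather than a standard.

```python
import math

DRIFT_ALERT_PSI = 0.2  # hypothetical alert threshold (rule of thumb)


def population_stability_index(expected, actual, eps: float = 1e-6) -> float:
    """Compute PSI between two lists of bin proportions.

    `expected` is the reference distribution (e.g. at deployment) and
    `actual` is the current one; both should sum to 1 over the same bins.
    """
    if len(expected) != len(actual):
        raise ValueError("distributions must use the same bins")
    psi = 0.0
    for e, a in zip(expected, actual):
        # Floor tiny proportions to avoid log(0) and division by zero.
        e = max(e, eps)
        a = max(a, eps)
        psi += (a - e) * math.log(a / e)
    return psi


reference = [0.25, 0.25, 0.25, 0.25]  # risk-score bins at deployment
current = [0.10, 0.20, 0.30, 0.40]    # scores have shifted upward

stable_psi = population_stability_index(reference, reference)
shifted_psi = population_stability_index(reference, current)
drift_alert = shifted_psi > DRIFT_ALERT_PSI
```

A rising PSI does not say why the distribution moved; it could reflect new fraud tactics, a seasonal change in customer behavior, or a data pipeline fault, which is why the alert should prompt investigation rather than automatic retraining.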

xLoop’s Approach To Deploying Agentic Fraud AI

The gap between a compelling agentic AI demonstration and a production system that reliably catches fraud, meets regulatory requirements, and integrates with an existing financial services technology stack is significant.

What Should Financial Institutions Do Before Deploying Agentic Fraud AI?

Five pre-conditions must be in place before any agentic system goes into production:

- Data infrastructure readiness. Clean, connected, real-time data pipelines are prerequisites — batch data delivered hours late, siloed between systems, or inconsistently formatted will produce unreliable outputs regardless of AI sophistication.

- Legacy system integration mapping. Identify precisely where agent outputs will feed into existing fraud operations platforms, core banking, case management, and compliance systems. Agentic AI should enhance what exists, not require a full technology re-platform.

- Use-case prioritization. Start with the highest-impact, most data-rich fraud type your institution faces. Prove ROI clearly before scaling to full coverage. The Allianz approach — scoping Project Nemo to a single, well-defined claim type — is a model worth following.

- Governance framework definition. Human-in-the-loop boundaries, escalation protocols, audit log requirements, and regulatory documentation standards must all be defined before the first agent decides.

- Team upskilling and role redesign. Fraud analysts shift from alert triaging to edge-case adjudication, AI performance oversight, and escalation handling — requiring training and role redesign that should begin well before deployment.

What Makes xLoop's Approach to Agentic Fraud AI Different?

- Purpose-built for financial services fraud.

- Multi-agent architecture with defined agent specialization.

- Explainability as a first-principles design requirement.

- Integration-native deployment.

- Sector depth and proven deployment experience. Production experience across banking, insurance, and payments with deep understanding of each sector's distinct fraud typologies and regulatory context.

What Measurable Outcomes Should Financial Institutions Expect?

Benchmarks from production deployments:

The compounding advantage:

Institutions that deploy agentic fraud AI now build detection models on their own institutional data — transaction histories, confirmed fraud cases, customer behavioral baselines — that competitors cannot replicate later. Every resolved case makes the system more accurate. The performance gap widens continuously.

Conclusion: If Fraud Moves At Machine Speed, Shouldn’t Your Defense?

Agentic AI is already transforming fraud prevention. Institutions that have embraced these systems are seeing tangible improvements: faster investigative cycles, sharper detection, fewer false alarms, and operational workflows that simply were not achievable with traditional tools.

The alternative path is increasingly untenable. Fraud patterns are evolving rapidly, fueled by generative technologies that can mimic identities, fabricate documents, and overwhelm legacy controls at speeds human‑centered processes cannot match. Threat actors are moving autonomously, escalating their sophistication, and exploiting every gap that slower, manual systems leave exposed.

For technology and fraud leaders, the real question is whether their institution will be among the early builders who secure the learning advantage, forge governance structures, and accumulate the proprietary intelligence that compounds over time; or among the late adopters who deploy only when competitive pressure, regulatory expectations, or loss exposure leave no alternative.

Early movers gain more than improved protection today. They establish feedback loops that continuously refine their models, building unique institutional intelligence that compounds with every case resolved — an advantage that organizations adopting later cannot easily catch up to.

Ready to Make Fraud Prevention Autonomous?

Connect with our team to discover how purpose-built AI agents detect, investigate, and neutralize fraud for your organization.
